frogy: subdomain enumeration script


My goal is to create an open-source Attack Surface Management solution capable of finding all the IPs, domains, subdomains, live websites, and login portals for a given company.

How it can help a large company (some use cases):

- Vulnerability management team: Can use the result to feed into their known and unknown assets database to increase their vulnerability scanning coverage.

- Threat intel team: Can use the result to feed into their intel DB to prioritize proactive monitoring for critical assets.

- Asset inventory team: Can use the result to keep their asset inventory database up to date by adding previously unknown Internet-facing assets and finding contact information for the assets inside their organization.

- SOC team: Can use the result to identify which assets they are and are not monitoring, and then gradually increase their coverage.

- Patch management team: Many large organizations are unaware of their legacy and abandoned Internet-facing assets; the team can use this result to identify which assets should be taken offline if they are no longer in use.

It has multiple use cases depending on your organization’s processes and technology landscape.

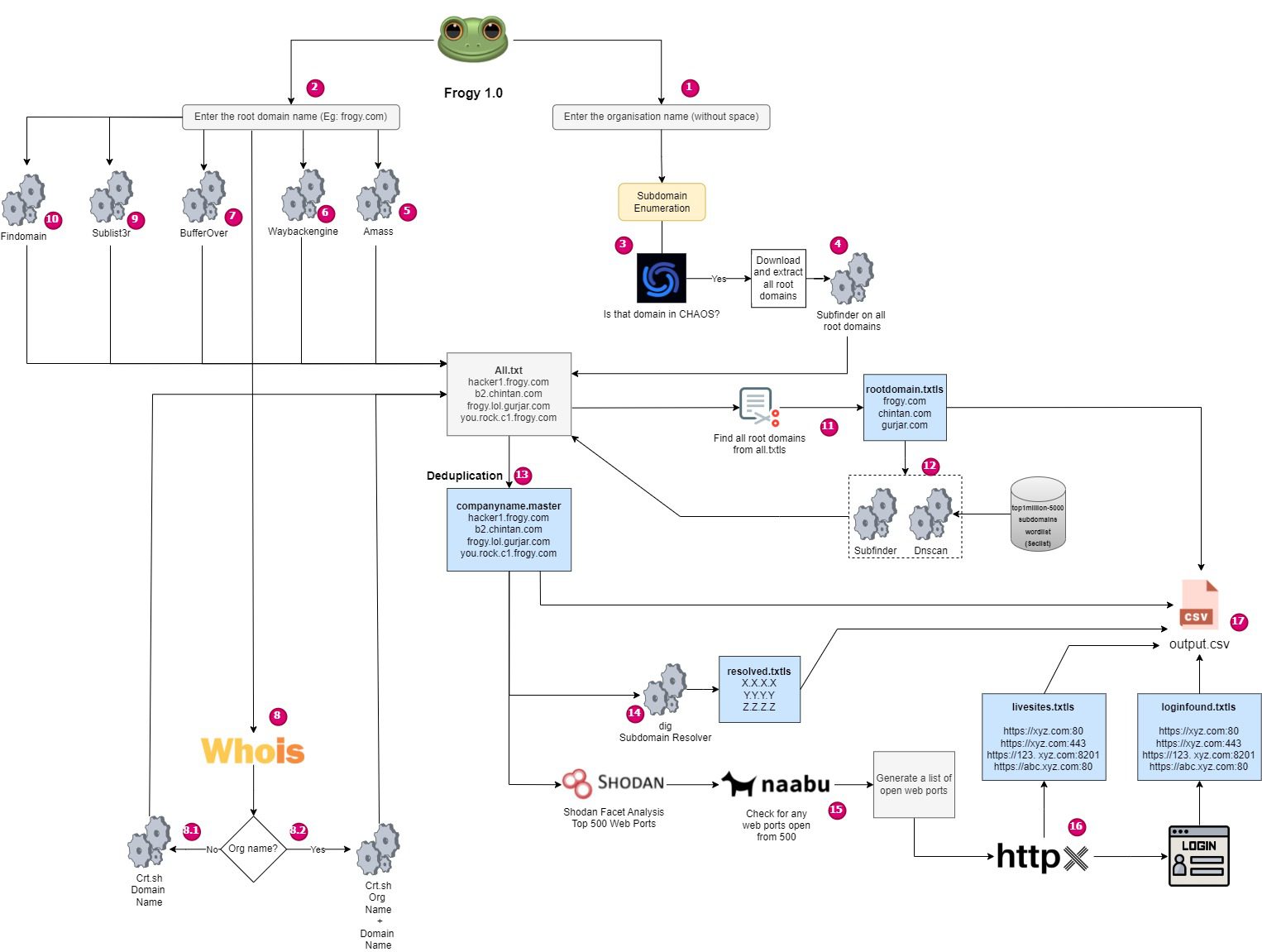

Logic

Features

- 🐸 Horizontal subdomain enumeration

- 🐸 Vertical subdomain enumeration

- 🐸 Resolving subdomains to IP addresses

- 🐸 Identifying live web applications

- 🐸 Identifying web applications with login portals enabled

- ✔️ Efficient folder structure management

- ✔️ Resolving subdomains using dig

- ✔️ Added dnscan for extended subdomain enumeration scope

- ✔️ Eliminated false positives.

- ✔️ Fixed a bug that caused false-positive reporting of domains and subdomains.

- ✔️ Searching crt.sh by the registered organization name from WHOIS, instead of by domain name, produced some garbage data; the results are now filtered to keep only domains.

- ✔️ Now finds live websites on all standard/non-standard ports.

- ✔️ Now finds all websites with login portals, including sites whose home page automatically redirects to a login page.

- ✔️ Now finds live web applications on the top 1,000 HTTP/HTTPS ports identified through Shodan facet analysis, using Naabu for a fast port scan followed by httpx. (Credit: @nbk_2000)

- ✔️ Generates CSV output (root domains, subdomains, live sites, login portals)

- ✔️ Now provides output for resolved subdomains

- ✔️ Added WayBackEngine support from another project

- ✔️ Added BufferOver support from another project.

- ✔️ Added Amass coverage.
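The false-positive and crt.sh filtering items above come down to deciding which collected names really belong to a target root domain. The sketch below is a minimal illustration of that idea in Python, not frogy's own code. `filter_subdomains` is a hypothetical helper, and it assumes the raw entries are hostname strings in which one entry may hold several newline-separated names.

```python
from __future__ import annotations

from typing import Iterable


def filter_subdomains(raw_entries: Iterable[str], root_domain: str) -> list[str]:
    """Return the sorted, unique hostnames that belong to root_domain.

    Hypothetical helper for illustration; not part of frogy.
    """
    root = root_domain.strip().lower().rstrip(".")
    found: set[str] = set()
    for entry in raw_entries:
        # Assumption: one raw entry may hold several newline-separated names.
        for name in entry.splitlines():
            host = name.strip().lower().rstrip(".")
            # Drop wildcard prefixes such as "*.".
            if host.startswith("*."):
                host = host[2:]
            # Skip values that are not hostnames (e-mail addresses, free text).
            if not host or "@" in host or " " in host:
                continue
            # Keep the root itself or a true subdomain of it.
            if host == root or host.endswith("." + root):
                found.add(host)
    return sorted(found)


if __name__ == "__main__":
    entries = ["*.example.com\nwww.example.com", "notexample.com",
               "admin@example.com", "API.Example.com."]
    print(filter_subdomains(entries, "example.com"))
```

The key design choice is the `"." + root` suffix test. A plain `endswith(root)` check would accept look-alike domains such as `notexample.com` for `example.com`, which is exactly the kind of false positive the feature list mentions.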
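A simple heuristic can approximate login-portal detection: treat a page as a login portal if its HTML contains a password input. The Python sketch below implements that heuristic with only the standard library's `html.parser`. frogy's actual detection logic may differ, and `looks_like_login_page` and `PasswordFieldFinder` are hypothetical names.

```python
from html.parser import HTMLParser


class PasswordFieldFinder(HTMLParser):
    """Records whether an <input type="password"> tag appears in the page."""

    def __init__(self) -> None:
        super().__init__()
        self.found = False

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names; attribute values keep
        # their case and are None for valueless attributes such as "disabled".
        if tag != "input":
            return
        for name, value in attrs:
            if name == "type" and value and value.strip().lower() == "password":
                self.found = True

    # Self-closing tags (<input ... />) reach handle_starttag through the
    # default handle_startendtag, so no extra handler is needed.


def looks_like_login_page(html: str) -> bool:
    """Heuristic (assumption): a page with a password field is a login portal."""
    finder = PasswordFieldFinder()
    finder.feed(html)
    finder.close()
    return finder.found


if __name__ == "__main__":
    print(looks_like_login_page('<form><input name="user"><input TYPE="Password" name="pw"/></form>'))
    print(looks_like_login_page("<h1>Welcome</h1>"))
```

To cover home pages that redirect to a login page, fetch the HTML with a client that follows HTTP redirects (Python's `urllib.request.urlopen` does so by default) and run the check on the final page. This sketch would not catch redirects performed by JavaScript.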

Install

Requirements

Go, Python 3.x, jq

Install

git clone https://github.com/iamthefrogy/frogy.git

cd frogy

chmod +x install.sh

./install.sh

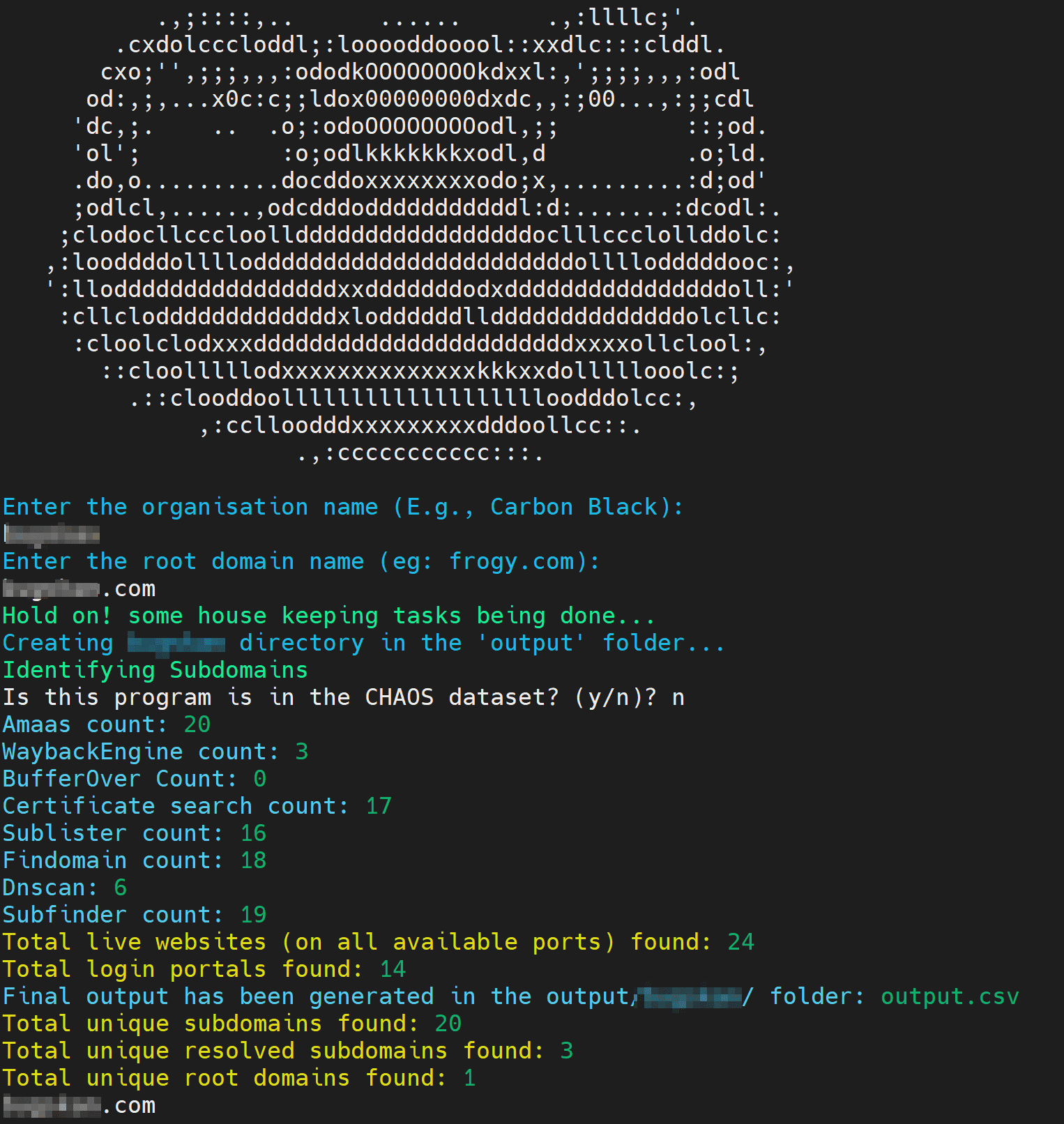

Use

./frogy.sh

Output

The output is saved to output/company_name/output.csv, where company_name is the name you enter as ‘Organization Name’ at the start of the script.
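Python's standard `csv` module can produce a CSV like the one described above. This minimal sketch assumes a hypothetical layout of one row per subdomain, with the four column groups from the feature list (root domain, subdomain, live site, login portal). frogy's real file layout may differ, and `SubdomainResult` and `write_results_csv` are illustrative names.

```python
import csv
import io
from dataclasses import dataclass


@dataclass
class SubdomainResult:
    """One row of results; hypothetical structure for illustration."""
    root_domain: str
    subdomain: str
    live_url: str = ""          # empty when no live web application was found
    login_portal: bool = False  # True when a login page was detected


# Assumed column layout; frogy's real CSV may be organized differently.
HEADER = ["Root Domain", "Subdomain", "Live Site", "Login Portal"]


def write_results_csv(results, stream) -> None:
    """Write a header plus one CSV row per subdomain to an open text stream."""
    writer = csv.writer(stream)
    writer.writerow(HEADER)
    for result in results:
        writer.writerow([
            result.root_domain,
            result.subdomain,
            result.live_url,
            "yes" if result.login_portal else "no",
        ])


if __name__ == "__main__":
    buffer = io.StringIO()
    write_results_csv(
        [
            SubdomainResult("example.com", "www.example.com", "https://www.example.com", True),
            SubdomainResult("example.com", "old.example.com"),
        ],
        buffer,
    )
    print(buffer.getvalue(), end="")
```

To write a real file, pass a stream opened with `open(path, "w", newline="")`, as the `csv` module documentation recommends, where `path` points at the company's folder under `output/`.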

Source: https://github.com/iamthefrogy/