S3Scanner v3.0.4 releases: Scan for open S3 buckets and dump

S3Scanner

A tool to find open S3 buckets in AWS or other cloud providers:

- AWS

- DigitalOcean

- DreamHost

- GCP

- Linode

- Custom

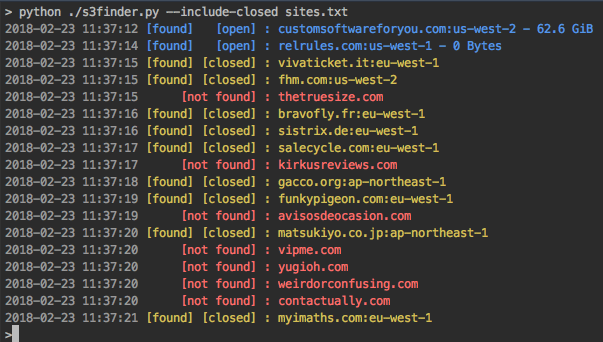

The tool takes a list of bucket names to check and writes any buckets it finds to a file. It can also list the contents of ‘open’ buckets or dump them locally.
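Listing an open bucket's contents generally comes down to an anonymous GET on the bucket URL, which S3 answers with a `ListBucketResult` XML document naming each object. The Go sketch below parses such a response with `encoding/xml`. It is an illustration, not S3Scanner's own code: `listKeys` is a hypothetical helper, and the sample body, bucket name and keys are made up.

```go
package main

import (
	"encoding/xml"
	"fmt"
)

// listBucketResult mirrors the subset of the S3 list-objects XML response
// used here: the bucket name plus each object's key and size.
type listBucketResult struct {
	Name     string `xml:"Name"`
	Contents []struct {
		Key  string `xml:"Key"`
		Size int64  `xml:"Size"`
	} `xml:"Contents"`
}

// listKeys extracts the object keys from a raw ListBucketResult document.
func listKeys(body []byte) ([]string, error) {
	var res listBucketResult
	if err := xml.Unmarshal(body, &res); err != nil {
		return nil, err
	}
	keys := make([]string, 0, len(res.Contents))
	for _, c := range res.Contents {
		keys = append(keys, c.Key)
	}
	return keys, nil
}

func main() {
	// Hypothetical response body for an open bucket named "my-bucket".
	body := []byte(`<?xml version="1.0" encoding="UTF-8"?>
<ListBucketResult xmlns="http://s3.amazonaws.com/doc/2006-03-01/">
  <Name>my-bucket</Name>
  <Contents><Key>backup.sql</Key><Size>1024</Size></Contents>
  <Contents><Key>logs/app.log</Key><Size>2048</Size></Contents>
</ListBucketResult>`)
	keys, err := listKeys(body)
	if err != nil {
		panic(err)
	}
	fmt.Println(keys) // [backup.sql logs/app.log]
}
```

Dumping a bucket would then mean fetching each listed key; large buckets return paginated listings, which this sketch ignores.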

Features

- ⚡️ Multi-threaded scanning

- 🔭 Supports many built-in S3 storage providers, or a custom one

- 🕵️‍♀️ Scans all bucket permissions to find misconfigurations

- 💾 Save results to a Postgres database

- 🐇 Connect to RabbitMQ for automated scanning at scale

- 🐳 Docker support
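Multi-threaded scanning is commonly built as a worker pool: a fixed number of goroutines pull bucket names from a channel and report results on another. The Go sketch below shows that pattern only; it is not S3Scanner's implementation. `scanAll`, `result` and the `check` callback are hypothetical, and `check` stands in for whatever existence/permission requests the real tool makes.

```go
package main

import (
	"bufio"
	"fmt"
	"strings"
	"sync"
)

// result records whether a bucket name passed the (hypothetical) check.
type result struct {
	Name   string
	Exists bool
}

// scanAll reads one bucket name per line from input and checks the names
// concurrently using `workers` goroutines. Result order is not guaranteed.
func scanAll(input string, workers int, check func(string) bool) []result {
	names := make(chan string)
	out := make(chan result)
	var wg sync.WaitGroup

	// Start the workers; each exits when the names channel is closed.
	for i := 0; i < workers; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for name := range names {
				out <- result{Name: name, Exists: check(name)}
			}
		}()
	}

	// Feed trimmed, non-empty names, then close the channel.
	go func() {
		sc := bufio.NewScanner(strings.NewReader(input))
		for sc.Scan() {
			if name := strings.TrimSpace(sc.Text()); name != "" {
				names <- name
			}
		}
		close(names)
	}()

	// Close the output channel once every worker has finished.
	go func() {
		wg.Wait()
		close(out)
	}()

	var results []result
	for r := range out {
		results = append(results, r)
	}
	return results
}

func main() {
	// Hypothetical check: pretend only names starting with "open-" exist.
	check := func(name string) bool { return strings.HasPrefix(name, "open-") }
	for _, r := range scanAll("open-logs\nprivate-data\n\nopen-backups\n", 4, check) {
		fmt.Printf("%s exists=%v\n", r.Name, r.Exists)
	}
}
```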

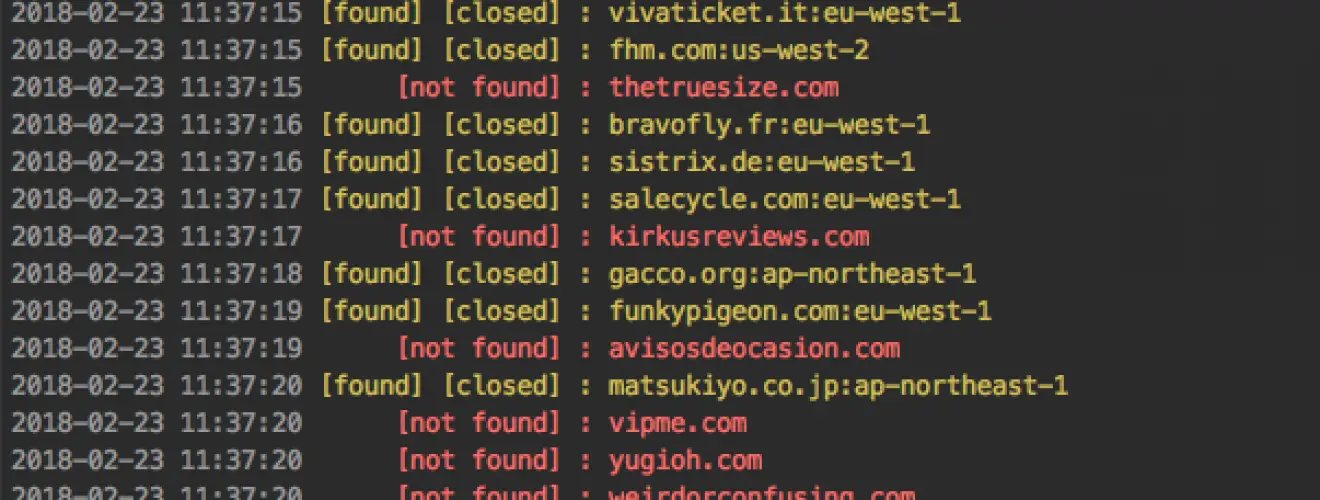

Interpreting Results

This tool will attempt to get all available information about a bucket, but it’s up to you to interpret the results.

Possible permissions for buckets:

- Read – List and view all files

- Write – Write files to the bucket

- Read ACP – Read all Access Control Policies attached to the bucket

- Write ACP – Write Access Control Policies to the bucket

- Full Control – All above permissions
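Because these permissions are granted independently, a result is naturally modelled as a set of flags, with Full Control being the union of the other four. The Go sketch below is a hypothetical model for reading results, not a type exported by S3Scanner; `Permission` and `Has` are illustrative names.

```go
package main

import "fmt"

// Permission is a bit set of the bucket permissions listed above.
type Permission uint8

const (
	Read     Permission = 1 << iota // list and view all files
	Write                           // write files to the bucket
	ReadACP                         // read the bucket's access control policies
	WriteACP                        // write the bucket's access control policies
)

// FullControl grants every permission above.
const FullControl = Read | Write | ReadACP | WriteACP

// Has reports whether p includes every bit in q.
func (p Permission) Has(q Permission) bool { return p&q == q }

func main() {
	// Hypothetical result: public users may list files but not read the ACL.
	public := Read
	fmt.Println(public.Has(Read), public.Has(ReadACP)) // true false
	fmt.Println(FullControl.Has(Write | WriteACP))     // true
}
```

Holding Read says nothing about ReadACP, and vice versa, so each flag has to be checked separately.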

Any or all of these permissions can be set for the two main user groups, as well as for individual users/groups:

- Authenticated Users

- Public Users (those without AWS credentials set)

- Individual users/groups (out of scope of this tool)
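In an S3 bucket ACL, the two main groups appear as grantees with fixed URIs: `AllUsers` (anyone, including unauthenticated requests) and `AuthenticatedUsers` (anyone with AWS credentials). Individual users are identified differently, typically by a canonical user ID. The Go sketch below maps a grantee URI onto the groups listed above; `granteeGroup` is a hypothetical helper, not part of S3Scanner.

```go
package main

import "fmt"

// Predefined S3 ACL group URIs.
const (
	allUsersURI      = "http://acs.amazonaws.com/groups/global/AllUsers"
	authenticatedURI = "http://acs.amazonaws.com/groups/global/AuthenticatedUsers"
)

// granteeGroup maps an ACL grantee URI to the user groups described above.
// Anything else (other predefined groups, individual grantees) is treated
// as out of scope.
func granteeGroup(uri string) string {
	switch uri {
	case allUsersURI:
		return "Public Users"
	case authenticatedURI:
		return "Authenticated Users"
	default:
		return "Other grantee (out of scope)"
	}
}

func main() {
	fmt.Println(granteeGroup(allUsersURI))      // Public Users
	fmt.Println(granteeGroup(authenticatedURI)) // Authenticated Users
}
```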

What this means: just because a bucket doesn’t allow reading/writing ACLs doesn’t mean you can’t read/write files in the bucket. Conversely, you may be able to read ACLs but not read/write files in the bucket.

Changelog v3.0.4

Installation

Go

# replace version with latest release

go install -v github.com/sa7mon/s3scanner@v3.0.1

# or

go install -v github.com/sa7mon/s3scanner@latest

Docker

docker run --rm -it ghcr.io/sa7mon/s3scanner:latest -bucket my-bucket

Build from source

git clone git@github.com:sa7mon/S3Scanner.git && cd S3Scanner

go build -o s3scanner .

./s3scanner -bucket my-bucket

Usage

S3Scanner can scan and dump buckets on S3-compatible API services other than AWS by using the --endpoint-url argument. Depending on the service, you may also need the --endpoint-address-style or --insecure arguments.
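S3-compatible services address a bucket either path-style (`https://endpoint/bucket/`) or virtual-hosted-style (`https://bucket.endpoint/`), and `--endpoint-address-style` presumably selects between the two. The Go sketch below builds both forms with `net/url`. It assumes the endpoint includes its scheme; `bucketURL` is a hypothetical helper, and the DigitalOcean Spaces host is only an example.

```go
package main

import (
	"fmt"
	"net/url"
)

// bucketURL builds the request URL for bucket on an S3-compatible endpoint.
// pathStyle=true yields https://endpoint/bucket/; otherwise the
// virtual-hosted form https://bucket.endpoint/ is returned.
// The endpoint must include a scheme (e.g. "https://").
func bucketURL(endpoint, bucket string, pathStyle bool) (string, error) {
	u, err := url.Parse(endpoint)
	if err != nil {
		return "", err
	}
	if pathStyle {
		u.Path = "/" + bucket + "/"
	} else {
		u.Host = bucket + "." + u.Host
		u.Path = "/"
	}
	return u.String(), nil
}

func main() {
	// Example endpoint for illustration only.
	for _, pathStyle := range []bool{true, false} {
		s, err := bucketURL("https://nyc3.digitaloceanspaces.com", "my-bucket", pathStyle)
		if err != nil {
			panic(err)
		}
		fmt.Println(s)
	}
	// https://nyc3.digitaloceanspaces.com/my-bucket/
	// https://my-bucket.nyc3.digitaloceanspaces.com/
}
```

Path style suits self-hosted services reached by IP or `localhost`, where a bucket subdomain would not resolve.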

Copyright (c) 2019 Dan Salmon

Source: https://github.com/sa7mon