deepsecrets v1.1.2 releases: a better tool for secret scanning

Yet another tool – why?

Existing tools don’t really “understand” code; instead, they mostly treat it as plain text.

DeepSecrets extends the classic regex-search approach with semantic analysis, dangerous-variable detection, and more efficient use of entropy analysis. It understands code in 500+ languages and formats by lexing and parsing it, techniques commonly used in SAST tools.
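
Entropy analysis scores how random a string looks: random API keys score high, ordinary words score low. The sketch below computes Shannon entropy in bits per character. The helper names and the 4.0 threshold are illustrative assumptions, not DeepSecrets’ actual implementation or settings.

```python
import math
from collections import Counter


def shannon_entropy(value: str) -> float:
    """Return the Shannon entropy of `value` in bits per character."""
    if not value:
        return 0.0
    length = len(value)
    counts = Counter(value)
    return -sum((n / length) * math.log2(n / length) for n in counts.values())


# Hypothetical cut-off: strings at or above it are treated as random-looking.
ENTROPY_THRESHOLD = 4.0


def looks_random(value: str, threshold: float = ENTROPY_THRESHOLD) -> bool:
    """Flag strings whose per-character entropy reaches the threshold."""
    return shannon_entropy(value) >= threshold
```

For example, a 16-character string with no repeated characters scores exactly 4.0 bits, while the word `password` scores 2.75 bits.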

DeepSecrets also introduces a new way to find secrets: provide the hashes of your known secrets, and DeepSecrets will find those secrets in plain text in your code.
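
The idea can be sketched as follows: the ruleset contains only digests of known secrets, the scanner hashes every candidate token, and a matching digest reveals where the plaintext secret appears. SHA-256 and the `find_known_secrets` helper are assumptions for illustration; the hash algorithm and ruleset format DeepSecrets actually uses may differ.

```python
import hashlib
from typing import Iterable


def sha256_hex(value: str) -> str:
    """Hex-encoded SHA-256 digest of a UTF-8 string."""
    return hashlib.sha256(value.encode("utf-8")).hexdigest()


def find_known_secrets(tokens: Iterable[str], known_hashes: set[str]) -> list[str]:
    """Return the tokens whose digest appears in `known_hashes`."""
    return [token for token in tokens if sha256_hex(token) in known_hashes]


# The ruleset holds only digests, never the plaintext secrets themselves.
known = {sha256_hex("s3cr3t-db-pass")}
```

The design benefit is that the list of hashes can be shared with a scanner without exposing the secrets it is meant to detect.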

Under the hood

There are several core concepts:

- File

- Tokenizer

- Token

- Engine

- Finding

- ScanMode

File

A Pythonic representation of a file, with all the methods needed to manage it.

Tokenizer

A component that breaks a file’s content into pieces (Tokens) according to its own logic. The following tokenizers are available:

- FullContentTokenizer: treats all content as a single token. Useful for regex-based search.

- PerWordTokenizer: breaks the given content into words and line breaks.

- LexerTokenizer: uses language-specific knowledge to break code into semantically correct pieces, with additional context for each token.
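
The two simpler strategies can be sketched in a few lines to show the difference in granularity: one token per file versus one token per word, each carrying its file and line. The `Token` fields and function names are hypothetical, not DeepSecrets’ classes, and "by words" is interpreted here as whitespace splitting.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Token:
    value: str
    file: str
    line: int  # 1-based line where the token starts


def full_content_tokenize(content: str, file: str) -> list[Token]:
    """Treat the whole file as one token, which suits regex search."""
    return [Token(content, file, 1)]


def per_word_tokenize(content: str, file: str) -> list[Token]:
    """Split content into whitespace-separated words, tracking line numbers."""
    tokens = []
    for line_no, line in enumerate(content.splitlines(), start=1):
        for word in line.split():
            tokens.append(Token(word, file, line_no))
    return tokens
```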

Token

A string with additional information about its semantic role, the file it comes from, and its location inside that file.
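
To illustrate what a semantic role adds to a plain string, the sketch below uses Python’s standard `tokenize` module as a stand-in lexer for Python source only. DeepSecrets’ own lexer covers many languages and its token roles are not described here, so the `LexedToken` class and the role names (taken from the stdlib `token` module) are assumptions.

```python
import io
import token
import tokenize
from dataclasses import dataclass

# Layout-only token types that carry no content worth scanning.
_SKIPPED = {token.NEWLINE, token.NL, token.INDENT, token.DEDENT, token.ENDMARKER}


@dataclass(frozen=True)
class LexedToken:
    value: str
    role: str  # lexical role reported by the lexer, e.g. NAME, OP, STRING
    file: str
    line: int
    column: int


def lex_python(source: str, file: str) -> list[LexedToken]:
    """Break Python source into tokens annotated with role and position."""
    result = []
    for tok in tokenize.generate_tokens(io.StringIO(source).readline):
        if tok.type in _SKIPPED:
            continue
        row, col = tok.start
        result.append(LexedToken(tok.string, token.tok_name[tok.type], file, row, col))
    return result
```

With roles attached, a later stage can tell that `"hunter2"` in `password = "hunter2"` is a STRING assigned to the NAME `password`, which a per-word split cannot express.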

Engine

A component that searches a single token for secrets using its own logic and returns a set of Findings. There are three engines available:

- RegexEngine: checks tokens’ values against a special ruleset.

- SemanticEngine: checks tokens produced by the LexerTokenizer using additional context, namely variable names and values.

- HashedSecretEngine: checks tokens’ values by hashing them and looking for matching hashes in a special ruleset.
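
A rough sketch of the regex and semantic approaches follows. The regex rule is a commonly used pattern for AWS access key IDs; the rule ids, the dangerous-name pattern, and the function signatures are hypothetical and are not DeepSecrets’ real rulesets or API. Note that the semantic check needs the variable name as extra context, which is what a lexer-based tokenizer supplies.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    rule_id: str
    value: str
    line: int


# Hypothetical ruleset: rule id -> compiled pattern.
REGEX_RULES = {
    "aws-access-key-id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}

# Hypothetical variable-name fragments considered dangerous.
DANGEROUS_NAMES = re.compile(r"pass(word|wd)?|secret|token|api_?key", re.IGNORECASE)


def regex_engine(value: str, line: int) -> set[Finding]:
    """Check a token's value against every pattern in the ruleset."""
    return {
        Finding(rule_id, match.group(0), line)
        for rule_id, pattern in REGEX_RULES.items()
        for match in pattern.finditer(value)
    }


def semantic_engine(variable: str, value: str, line: int) -> set[Finding]:
    """Flag a non-empty string literal assigned to a dangerously named variable."""
    literal = value.strip("'\"")
    if literal and DANGEROUS_NAMES.search(variable):
        return {Finding("dangerous-variable", literal, line)}
    return set()
```

Returning sets means the same match reported twice collapses into a single finding.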

Finding

A data structure representing a problem detected inside code. It records the precise location inside a file and the rule that found it.
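
Because engines return a set of Findings, a finding type must be hashable so duplicates collapse. A frozen dataclass provides that; the field names below are assumptions, not DeepSecrets’ actual class.

```python
from dataclasses import dataclass


@dataclass(frozen=True, order=True)
class Finding:
    file: str
    line: int
    column: int
    rule_id: str  # identifier of the rule that produced the finding

    def location(self) -> str:
        """Human-readable file:line:column position."""
        return f"{self.file}:{self.line}:{self.column}"
```

`order=True` also makes findings sortable by file and position, which keeps reports deterministic even though sets are unordered.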

ScanMode

This component is responsible for the scan process:

- Defines the scope of analysis for a given work directory, respecting exceptions.

- Allows declaring a PerFileAnalyzer: the method called against each file, returning a list of findings. Its primary use is to initialize the necessary engines, tokenizers, and rulesets.

- Runs the scan: a multiprocessing pool analyzes every file in parallel.

- Prepares the results for output and outputs them.
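
This scan flow can be sketched with the standard library: glob-based exclusions define the scope, a module-level per-file analyzer is mapped over the files with `multiprocessing.Pool`, and the per-file lists are flattened for output. The function names, the glob exclusion format, and the toy analyzer are assumptions; DeepSecrets’ real ScanMode and PerFileAnalyzer interfaces may differ.

```python
import fnmatch
import os
from multiprocessing import Pool
from typing import Callable, Optional

# A per-file analyzer takes a path and returns that file's findings
# (plain strings here to keep the sketch small).
Analyzer = Callable[[str], list[str]]


def collect_files(work_dir: str, excluded: list[str]) -> list[str]:
    """Walk work_dir, keeping files whose relative path matches no exclusion glob."""
    selected = []
    for root, _dirs, names in os.walk(work_dir):
        for name in names:
            path = os.path.join(root, name)
            rel = os.path.relpath(path, work_dir)
            if not any(fnmatch.fnmatch(rel, pattern) for pattern in excluded):
                selected.append(path)
    return sorted(selected)


def password_line_analyzer(path: str) -> list[str]:
    """Toy analyzer: report lines that mention 'password'."""
    with open(path, encoding="utf-8", errors="replace") as fh:
        return [f"{path}:{n}" for n, line in enumerate(fh, 1) if "password" in line.lower()]


def run_scan(work_dir: str, excluded: list[str], analyzer: Analyzer,
             processes: Optional[int] = None) -> list[str]:
    files = collect_files(work_dir, excluded)
    if processes == 1:
        # Sequential mode: convenient for debugging.
        per_file = [analyzer(path) for path in files]
    else:
        # The analyzer must be a picklable, module-level function.
        with Pool(processes) as pool:
            per_file = pool.map(analyzer, files)
    # Flatten per-file results into one report-ready list.
    return [finding for findings in per_file for finding in findings]
```

When using worker processes, call `run_scan` under an `if __name__ == "__main__":` guard, because platforms that spawn workers re-import the main module.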

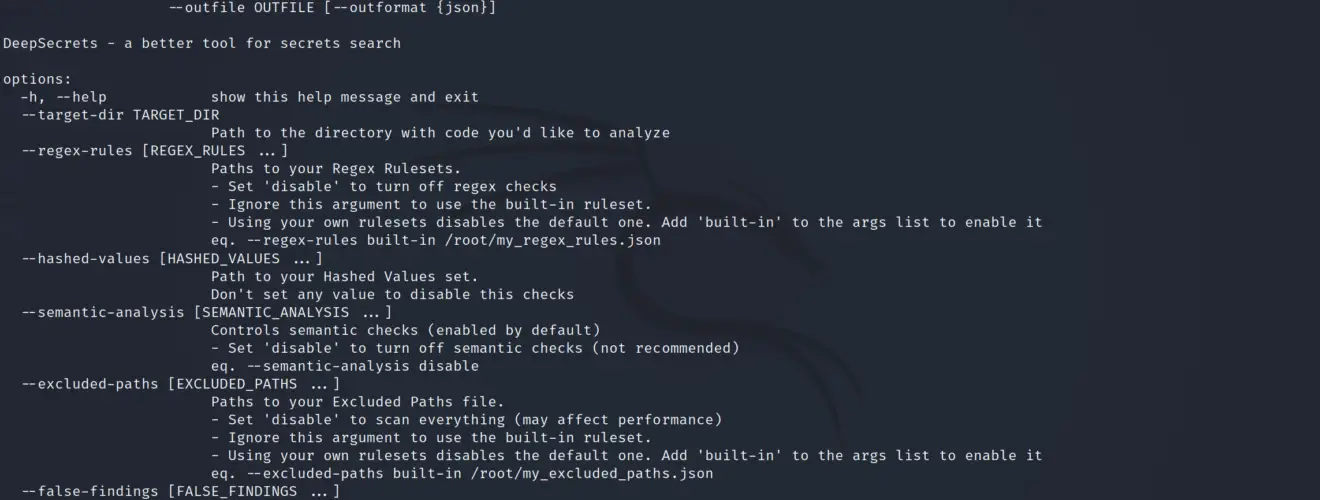

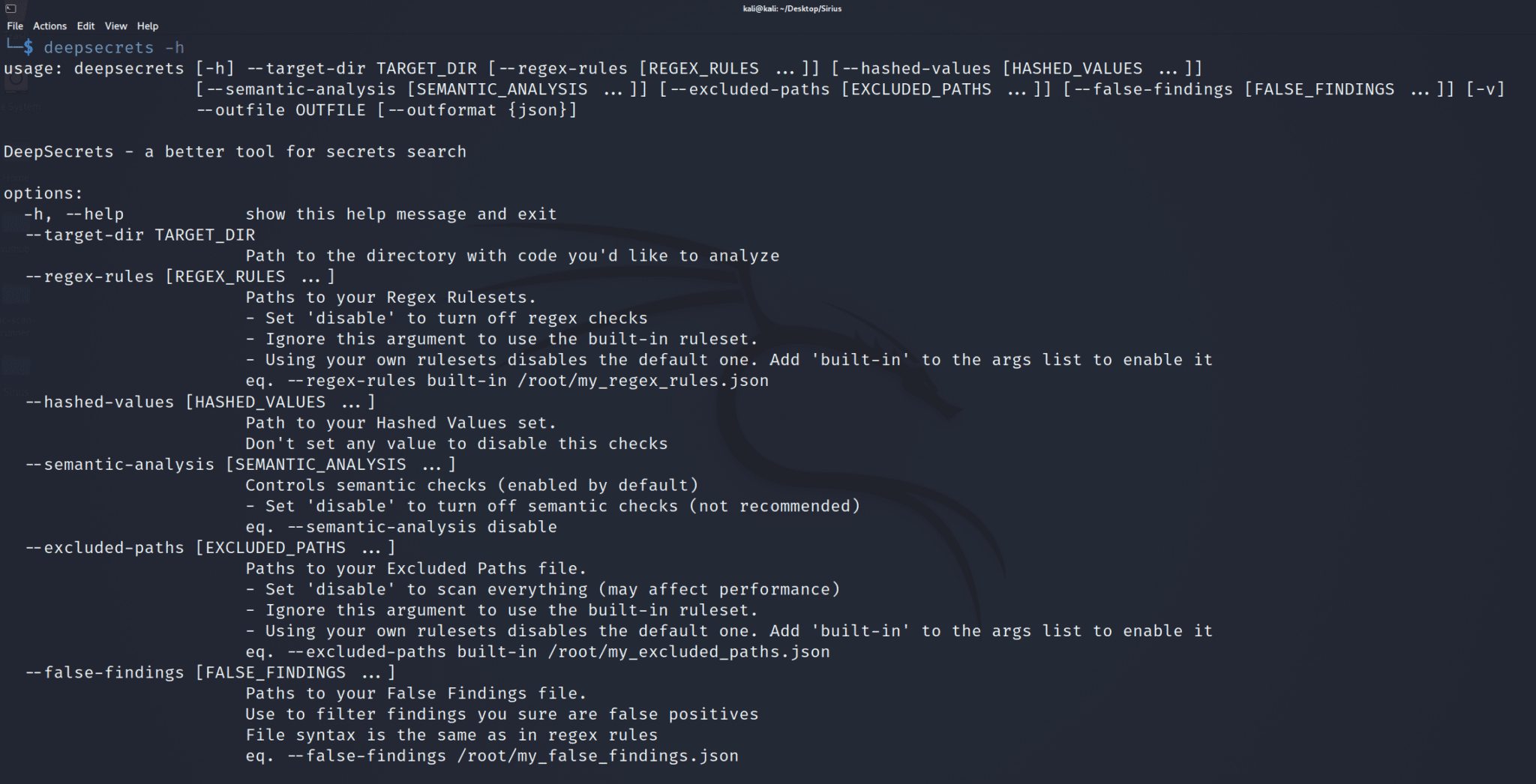

The current implementation provides a CliScanMode, which is built from the user-provided config passed through CLI arguments.
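
Building a scan configuration from command-line arguments can be sketched with `argparse`. The flag names (`--target-dir`, `--exclude`, `--processes`) and the `ScanConfig` class are made up for illustration and are not the documented DeepSecrets CLI; the tool’s own `--help` output lists the real options.

```python
import argparse
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ScanConfig:
    work_dir: str
    excluded: list[str] = field(default_factory=list)
    processes: Optional[int] = None


def build_parser() -> argparse.ArgumentParser:
    # Hypothetical flags, for illustration only.
    parser = argparse.ArgumentParser(description="Sketch of a CLI-configured scan")
    parser.add_argument("--target-dir", required=True, help="directory to scan")
    parser.add_argument("--exclude", action="append", default=None,
                        help="glob of paths to skip; may be repeated")
    parser.add_argument("--processes", type=int, default=None, help="worker count")
    return parser


def config_from_args(argv: Optional[list[str]] = None) -> ScanConfig:
    """Parse CLI arguments into a ScanConfig the scan mode can consume."""
    args = build_parser().parse_args(argv)
    return ScanConfig(work_dir=args.target_dir,
                      excluded=args.exclude or [],
                      processes=args.processes)
```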

Changelog v1.1.2

- Fixed an extremely high false-positive rate for certain Swift constructions

Install & Use

Copyright (c) 2023 Avito