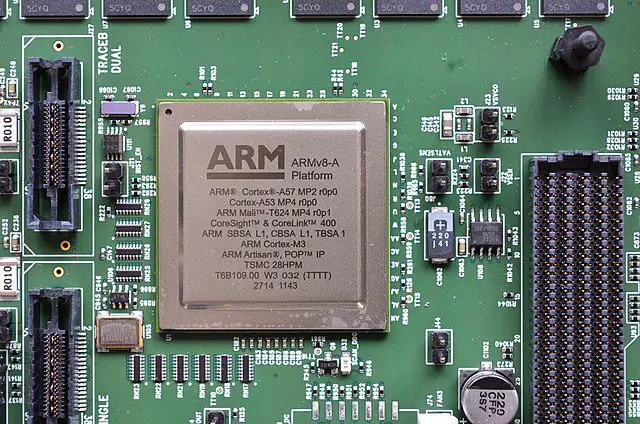

At CES 2026, Arm presented its “Computing Platform” as central to the next phase of AI. The company described 2025 as a turning point and argued that by 2026 AI has moved out of the lab and into everyday life. It attributes this shift to two forces working together: “Physical AI” and “Edge AI.”

Arm said the two are converging quickly. Physical AI gives autonomous vehicles and robots the ability to perceive and navigate the physical world safely, while Edge AI moves computation from the cloud to the device, which cuts latency and keeps data private. For instance, robots now refine their skills in digital-twin simulations, XR devices have become training environments, and wearables anticipate user needs. These applications depend on the Arm platform’s combination of high performance and low power consumption, which enables a continuous “Perception-Inference-Execution” feedback loop.

In the automotive sector, Arm emphasized a transition from “Software-Defined Vehicles” (SDVs) to “AI-Defined Platforms.” As an example, Rivian’s in-house autonomous driving platform uses custom silicon based on the Arm architecture. Tesla’s next-generation AI5 chip, also built on Arm, delivers a 40-fold performance increase over its predecessor, which underscores how much physical AI depends on energy efficiency. In addition, the NVIDIA DRIVE Thor platform supplies the compute for WeRide’s Level 4 robotaxis and for autonomous driving companies such as Nuro and Wayve.

Robotics was a centerpiece of this year’s CES, with Deep Robotics’ wheeled-legged machines, Roborock’s home cleaning robots, and Agility Robotics’ humanoids on show. These robots demonstrated autonomous navigation and fine manipulation, largely powered by Arm-based modules such as the NVIDIA Jetson Thor. In personal computing, “Windows on Arm” has gone mainstream, with more than 100 new models expected this year. The Arm architecture lets devices such as the Xiaomi Pad 7 Ultra maintain long battery life while running on-device AI tasks, including real-time translation and image generation, without a cloud connection.

Notably, the NVIDIA DGX Spark AI workstation, built around the GB10 superchip with 20 integrated Arm cores, lets developers run large models with up to 200 billion parameters at their desks. Edge AI’s advantages are equally evident in wearables: the latest Ray-Ban Meta smart glasses, paired with neural-control wristbands, deliver spatial AI within tight power budgets, while the Oura Ring 4 provides continuous, detailed health analytics.

In the smart home, growing privacy concerns and adoption of the Matter standard have driven a return to local processing. Hubs from Google Nest, along with smart displays from Samsung and LG, use Arm processors to handle voice commands and automation locally, reducing the risks of sending data to the cloud. Arm concludes that intelligence will be embedded throughout the technology landscape, with its architecture serving as the foundation for this era of “ubiquitous intelligence.”
