ossem-power-up: assess data quality, built on top of the awesome OSSEM

OSSEM

A tool to assess data quality, built on top of the awesome OSSEM project.

Mission

- Answer the question: "I want to start hunting ATT&CK techniques; which log sources and events are most suitable?"

- Create transparency on the strengths and weaknesses of your log sources

- Provide an easy way to evaluate your logs

OSSEM Power-up Overview

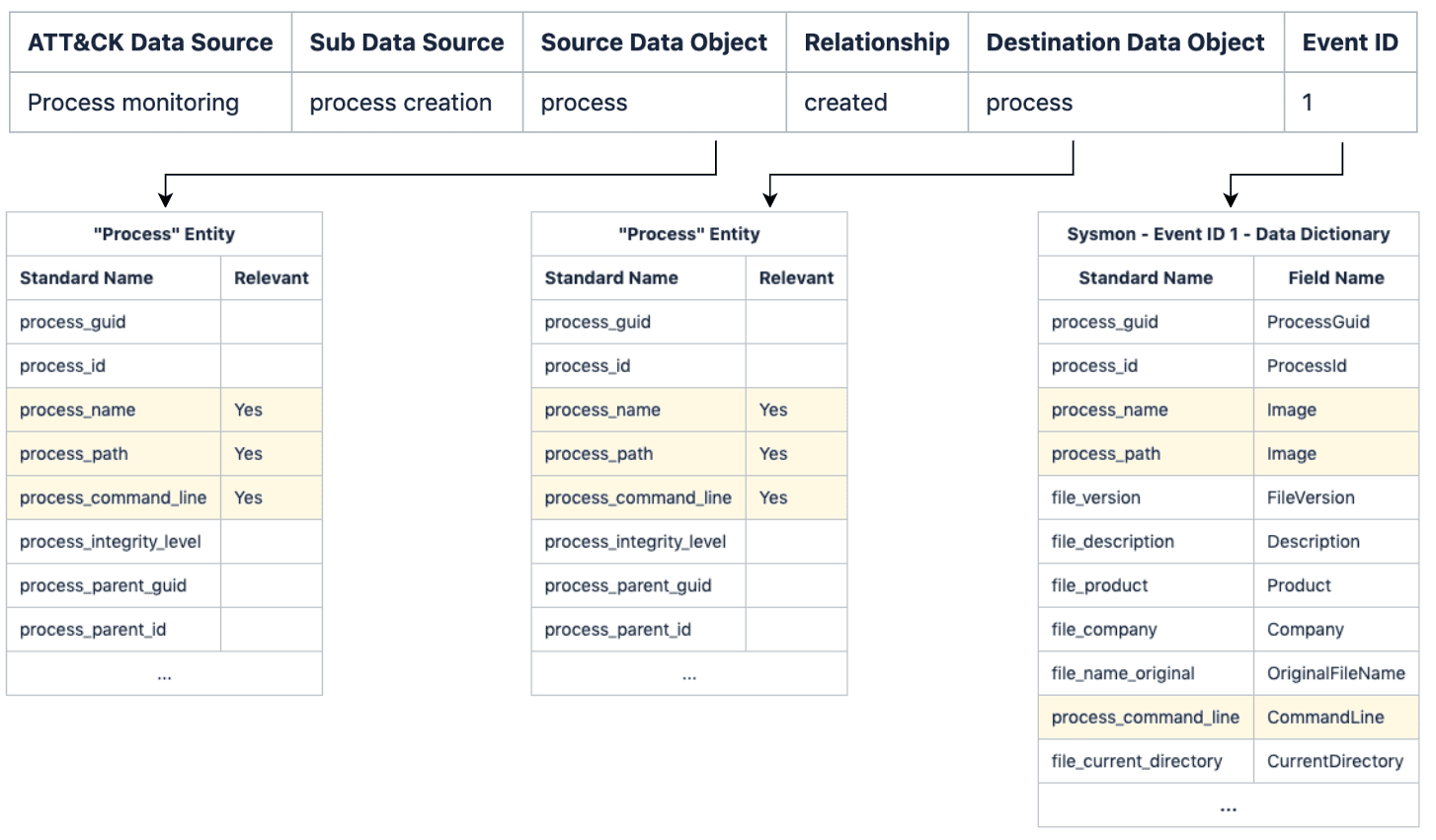

Power-up uses the OSSEM Detection Data Model (DDM) as the foundation of its data quality assessment, mainly because the DDM provides a structured way to correlate ATT&CK Data Sources, Common Information Model (CIM) entities, and Data Dictionaries (events) with each other.

For those unfamiliar with the DDM structure, here is a sample:

| ATT&CK Data Source | Sub Data Source | Source Data Object | Relationship | Destination Data Object | EventID |
|---|---|---|---|---|---|
| Process monitoring | process creation | process | created | process | 4688 |
| Process monitoring | process creation | process | created | process | 1 |
| Process monitoring | process termination | process | terminated | – | 4689 |
| Process monitoring | process termination | process | terminated | – | 5 |

As you can see, each entry in the DDM defines a sub-data source (scope) using abstract entities such as process, user, and file. Each entry also contains the event ID to which the scope applies.

In a nutshell, DDM entries play a major role in removing the complexity of raw events by providing a scope that defines how a log source (data channel) can be consumed.
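The sample rows above can be modeled as plain records to make the idea of a scope concrete. This is only an illustrative sketch: the `DDMEntry` class and the `events_by_scope` helper are hypothetical names, not part of OSSEM or power-up.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class DDMEntry:
    """One DDM row (hypothetical representation, for illustration only)."""
    data_source: str
    sub_data_source: str
    source: str
    relationship: str
    destination: Optional[str]  # None when the row has no destination object
    event_id: int


# The four sample rows from the table above.
ENTRIES = [
    DDMEntry("Process monitoring", "process creation", "process", "created", "process", 4688),
    DDMEntry("Process monitoring", "process creation", "process", "created", "process", 1),
    DDMEntry("Process monitoring", "process termination", "process", "terminated", None, 4689),
    DDMEntry("Process monitoring", "process termination", "process", "terminated", None, 5),
]


def events_by_scope(entries):
    """Group event IDs by (data source, sub data source)."""
    grouped = defaultdict(list)
    for entry in entries:
        grouped[(entry.data_source, entry.sub_data_source)].append(entry.event_id)
    return dict(grouped)


scopes = events_by_scope(ENTRIES)
print(scopes[("Process monitoring", "process creation")])
```

Grouping by scope shows which events can answer the same question (for example, "which process created which process?") regardless of the raw log format.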

Data Quality Dimensions

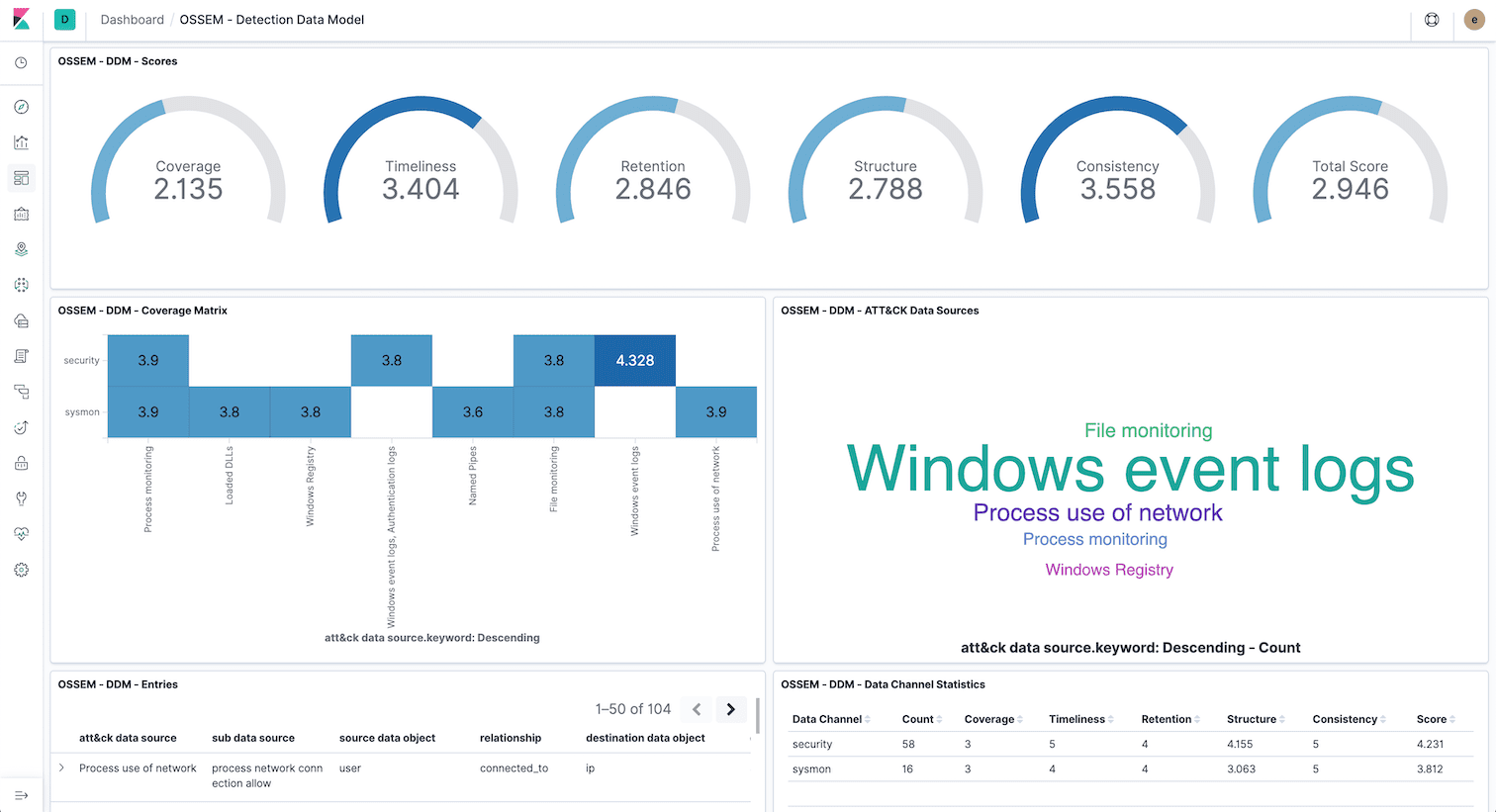

Power-up scores data quality along five distinct dimensions:

| Dimension | Type | Description |
|---|---|---|
| Coverage | Data channel | How many devices or network segments are covered by the data channel |
| Timeliness | Data channel | How long it takes for the event to become available |
| Retention | Data channel | How long the event remains available |
| Structure | Event | How complete the event is, i.e. whether the relevant fields are available |
| Consistency | Event | How standard the event fields are, i.e. whether the fields have been normalized |

Every dimension is rated with a score from 0 (none) to 5 (excellent).

Coverage, Timeliness, and Retention

These dimensions are tied to data channels, and each channel's ratings propagate to all events that channel provides.

Due to the nature of these dimensions, they must be rated manually, according to the specifics of each data channel.
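As a rough sketch of how channel-level ratings could propagate to events, the snippet below copies a channel's manual ratings onto every event ID it provides. The function name, the dictionary layout, and the example ratings are assumptions made for illustration; they do not reflect the format of power-up's own configuration.

```python
CHANNEL_DIMENSIONS = ("coverage", "timeliness", "retention")


def propagate_channel_ratings(channel_ratings, event_ids):
    """Return {event_id: ratings}, giving each event a copy of the channel's ratings.

    channel_ratings: dict with one manually assigned 0-5 score per channel dimension.
    event_ids: the events provided by this data channel.
    """
    for dimension in CHANNEL_DIMENSIONS:
        score = channel_ratings[dimension]
        if not 0 <= score <= 5:
            raise ValueError(f"{dimension} must be between 0 and 5, got {score}")
    return {event_id: dict(channel_ratings) for event_id in event_ids}


# Hypothetical ratings for a single data channel that provides events 1 and 5.
ratings = {"coverage": 4, "timeliness": 5, "retention": 2}
per_event = propagate_channel_ratings(ratings, [1, 5])
print(per_event[1]["retention"])
```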

Power-up uses resources/dcs.yml to define a data channel and rate the dimensions:

Structure

To calculate how complete the event structure is, power-up compares the standard names in the data dictionary with the fields of the entities (CIM) referenced in the DDM entry (source and destination).

Because not all entity fields are relevant (relevance depends on the context), power-up uses the concept of profiles to select which fields need to match the data dictionary standard names. For example:

Note: There is an example profile in profiles/default.yml for you to play with.

The structure score is calculated with the following formula:

SCORE_PERCENT = (MATCHED_FIELDS / TOTAL_RELEVANT_FIELDS) * 100
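The formula can be sketched in a few lines of Python. This is a minimal illustration, assuming the relevant fields (as selected by a profile) and the data dictionary's standard names are both available as collections of strings. The function name and the field names in the example are hypothetical.

```python
def structure_percent(standard_names, relevant_fields):
    """Percentage of relevant entity fields that appear among the standard names."""
    relevant = set(relevant_fields)
    if not relevant:
        raise ValueError("the profile must select at least one relevant field")
    matched = relevant & set(standard_names)
    return len(matched) / len(relevant) * 100


# Hypothetical profile fields and data dictionary standard names.
relevant = ["process_name", "process_id", "process_path", "process_command_line"]
standard = ["process_name", "process_id", "process_path", "user_name"]
print(structure_percent(standard, relevant))  # 3 of 4 relevant fields match -> 75.0
```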

For the sake of clarity, here is an example of how the structure score is calculated:

Note: Because the Sysmon Event ID 1 data dictionary matches 100% of the relevant entity fields, the structure score will be rated as 5 (excellent).

The structure score is translated to the 0-5 scale in the following way:

| Percentage | Score |
|---|---|
| 0 | 0 |
| 1 to 25 | 1 |
| 26 to 50 | 2 |
| 51 to 75 | 3 |
| 76 to 99 | 4 |
| 100 | 5 |
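The table above translates directly into a small lookup function. The sketch below uses a hypothetical function name. Because the table only lists whole percentages, it assumes that a fractional value falls into the next band up (for example, 25.5 is scored as 2), which may differ from how power-up rounds.

```python
def structure_score(percent):
    """Map a structure percentage (0-100) to the 0-5 scale from the table."""
    if not 0 <= percent <= 100:
        raise ValueError(f"percent must be between 0 and 100, got {percent}")
    if percent == 0:
        return 0
    if percent == 100:
        return 5
    # Upper bounds of the 1, 2 and 3 bands; anything above 75 and below 100 scores 4.
    for upper_bound, score in ((25, 1), (50, 2), (75, 3)):
        if percent <= upper_bound:
            return score
    return 4


print([structure_score(p) for p in (0, 25, 26, 75, 99, 100)])
```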

Note: Depending on the use case (SIEM, Threat Hunting, Forensics), you can define different profiles so that you can rate your logs differently.

Consistency

To calculate consistency, power-up simply calculates the percentage of fields with a standard name in a data dictionary. Data dictionaries with a high number of fields mapped to a standard name are more likely to correlate with CIM entities.

The consistency score is calculated with the following formula:

SCORE_PERCENT = (STANDARD_NAME_FIELDS / TOTAL_FIELDS) * 100

The consistency score is translated to the 0-5 scale in the following way:

| Percentage | Score |
|---|---|
| 0 | 0 |
| 1 to 50 | 1 |
| 51 to 99 | 3 |
| 100 | 5 |
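The consistency formula and its scale can be combined in a short sketch. Everything here is illustrative: the function names are hypothetical, the data dictionary is assumed to be a mapping from raw field name to standard name (or `None` when no standard name is assigned), and the field names in the example are made up.

```python
def consistency_percent(fields):
    """Percentage of data dictionary fields that have a standard name.

    fields: mapping of raw field name -> standard name, or None if unmapped.
    """
    if not fields:
        raise ValueError("the data dictionary has no fields")
    named = sum(1 for standard_name in fields.values() if standard_name)
    return named / len(fields) * 100


def consistency_score(percent):
    """Map a consistency percentage (0-100) to the scale from the table (0, 1, 3 or 5)."""
    if not 0 <= percent <= 100:
        raise ValueError(f"percent must be between 0 and 100, got {percent}")
    if percent == 0:
        return 0
    if percent == 100:
        return 5
    return 1 if percent <= 50 else 3


# Hypothetical data dictionary: three of the four fields carry a standard name.
dictionary = {
    "Image": "process_path",
    "ProcessId": "process_id",
    "CommandLine": "process_command_line",
    "RuleName": None,
}
percent = consistency_percent(dictionary)
print(percent, consistency_score(percent))  # 75.0 3
```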